Apache Kafka® Support

Secure the Data Pipeline with Field Level Encryption and Tokenization

Apache Kafka® Encryption

Apache Kafka is an open-source distributed event streaming platform for moving large volumes of data in real time across multiple providers and use cases, including downstream data analysis. Thousands of enterprises rely on it for high-performance data pipelines, streaming analytics, and data integration. It is often used to move data from a traditional database or file storage into a modern analytic data warehouse such as Snowflake®, Amazon Redshift, or Apache Pinot™.

However, moving sensitive data through the pipeline in the clear can create serious security and compliance issues. Legacy data encryption methods are not sufficient: they do not allow control over who can see which data, and they can impede downstream data analytics and third-party applications.

Baffle® Data Protection Services (DPS) Transform for Kafka is a purpose-built software solution designed to simplify end-to-end security of the modern data pipeline.

Baffle DPS lets you deploy a transparent data security mesh that encrypts or tokenizes sensitive data while it moves from a traditional database to a modern analytic data warehouse. This data-centric security approach ensures that no cleartext data is exposed in the analytics pipeline, so sensitive data cannot be stolen in the clear. With Baffle DPS, Kafka messages can be encrypted end to end: a consumer can decrypt data from a Kafka topic on the Kafka broker only if it has been granted the proper access.

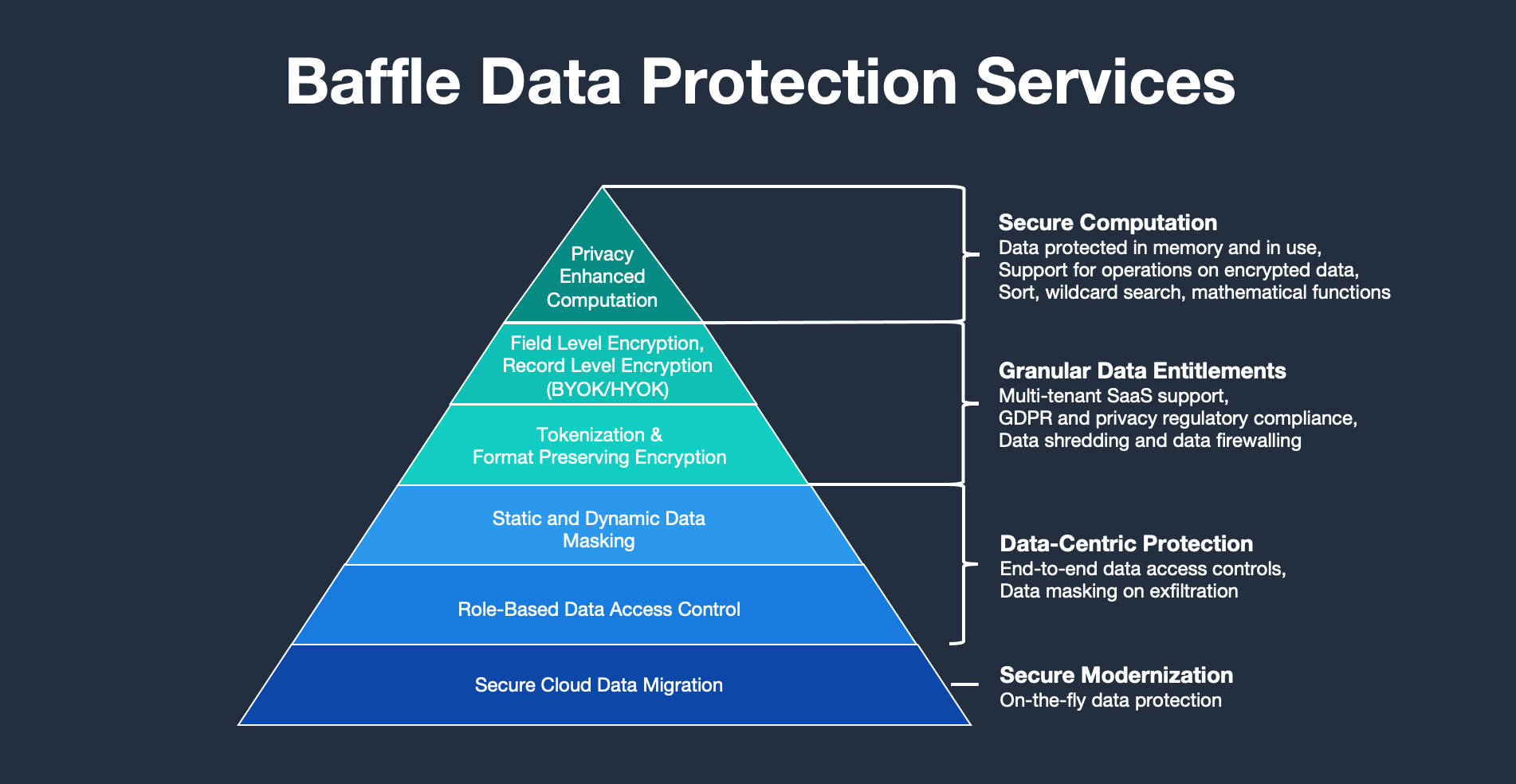

Baffle Data Protection Services

Baffle’s solution simplifies protection of your data in the cloud without requiring any application code modification or embedded SDKs.

How Baffle for Kafka Works

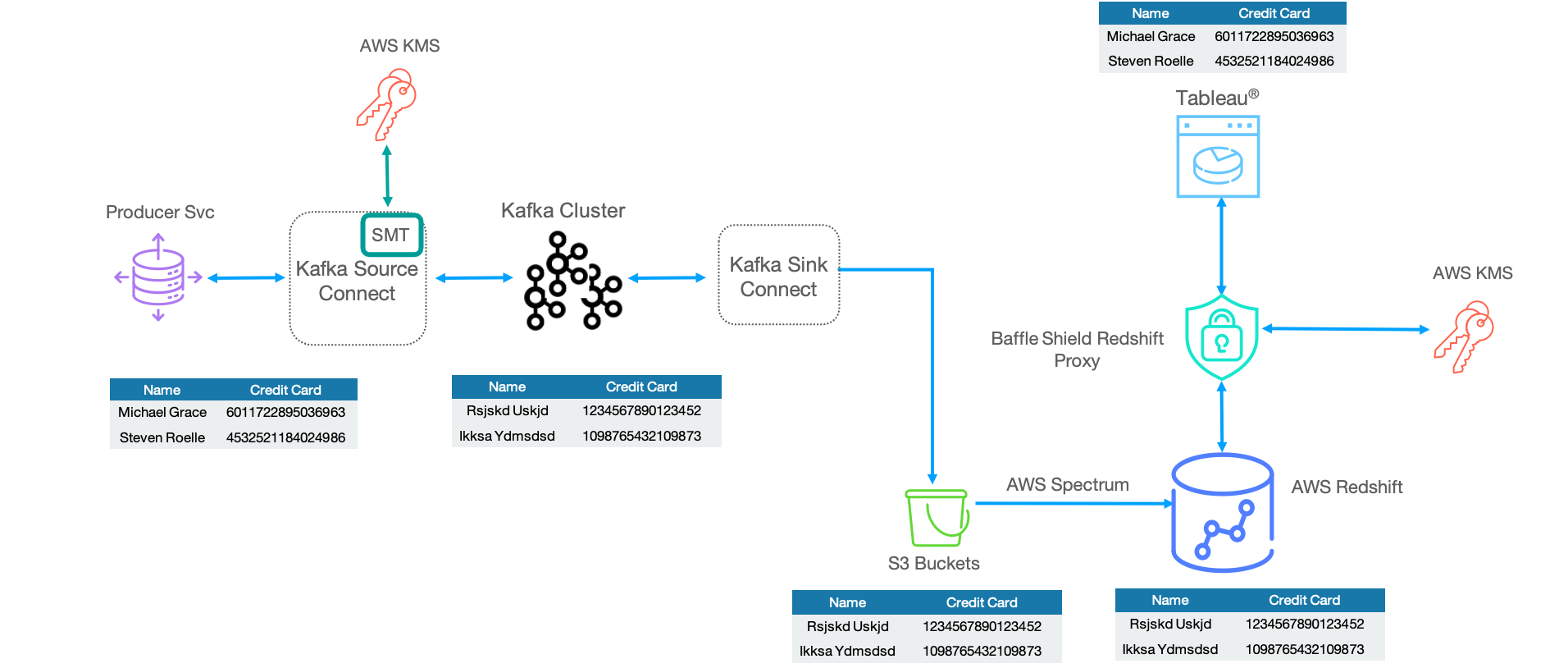

Kafka Connect ingests data into a Kafka cluster from a traditional database and delivers that data to modern analytic data warehouses. It provides connectivity between a source data store and a Kafka cluster, or between a Kafka cluster and a destination data store.

The Single Message Transform (SMT) capability allows a custom transformation engine to be plugged into a Kafka connector so that data can be transformed from its original value as it enters or leaves Kafka. The Baffle Transform uses SMT to encrypt or tokenize the Kafka stream, or only its sensitive fields, while data moves from a traditional database to a modern analytic data warehouse. As a result, no cleartext data is exposed in the analytics pipeline.
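
To make the idea of a per-record transform concrete, here is a minimal Python sketch of field-level tokenization applied to one record as it passes through a connector. It is an illustration only, not Baffle's implementation: the key, the field list, and the function names are all assumptions, and the HMAC-based token used here is one-way, whereas a production tokenization service would also support restoring the original value.

```python
import hashlib
import hmac

# Hypothetical key and field list, for illustration only.
# A real deployment would fetch keys from a key manager.
SECRET_KEY = b"demo-key-not-for-production"
SENSITIVE_FIELDS = {"ssn", "email"}


def tokenize(value: str) -> str:
    """Return a deterministic, one-way token for a field value."""
    digest = hmac.new(SECRET_KEY, value.encode("utf-8"), hashlib.sha256).hexdigest()
    return "tok_" + digest[:16]


def protect_record(record: dict) -> dict:
    """Tokenize only the sensitive fields; leave every other field untouched."""
    return {
        name: tokenize(str(value)) if name in SENSITIVE_FIELDS else value
        for name, value in record.items()
    }


record = {"id": 42, "name": "Ada", "ssn": "123-45-6789", "email": "ada@example.com"}
protected = protect_record(record)
print(protected)
```

Because only the listed fields change, downstream consumers can still join and aggregate on the untouched columns, and the deterministic token keeps equal inputs equal.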

Data is transported from a data source to the Confluent Kafka cluster through Kafka Connect, where the Baffle Transform plug-in encrypts or tokenizes sensitive data at field-level granularity.
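
As a rough sketch of how a transform is attached to a source connector, the snippet below builds a connector definition that could be submitted to the Kafka Connect REST API (`POST /connectors`). The `transforms` and `transforms.<alias>.type` keys are standard Kafka Connect configuration. The connector name, database URL, transform class `com.example.FieldProtect$Value`, and its `fields` option are placeholders invented for this example; they are not Baffle's actual class or option names.

```python
import json

# Connector definition in the shape accepted by the Kafka Connect REST API.
connector = {
    "name": "sales-source",  # hypothetical connector name
    "config": {
        "connector.class": "io.confluent.connect.jdbc.JdbcSourceConnector",
        "connection.url": "jdbc:postgresql://db.example.com:5432/sales",  # placeholder
        "mode": "incrementing",
        "incrementing.column.name": "id",
        "topic.prefix": "sales-",
        # Standard SMT wiring: a comma-separated alias list, then per-alias settings.
        "transforms": "protect",
        # Hypothetical transform class and option, for illustration only.
        "transforms.protect.type": "com.example.FieldProtect$Value",
        "transforms.protect.fields": "ssn,email",
    },
}

payload = json.dumps(connector, indent=2)
print(payload)
```

With this wiring the transform runs inside the Connect worker, so records are already protected before they are written to the topic.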

For consumption, the protected data is transported from the Confluent Kafka cluster to a data store through Kafka Connect, where the Baffle Transform plug-in restores sensitive data to its original values at field-level granularity through de-tokenization or decryption.
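
The round trip described above, with restoration limited to consumers that have been granted access, can be sketched as follows. This is a simplified assumption-laden example: `TokenVault`, `ROLE_POLICY`, and the role names are hypothetical, and an in-memory dictionary stands in for a real tokenization or key management service.

```python
import secrets


class TokenVault:
    """In-memory stand-in for a tokenization service (hypothetical)."""

    def __init__(self):
        self._token_to_value = {}
        self._value_to_token = {}

    def tokenize(self, value: str) -> str:
        # Reuse the existing token so equal values map to equal tokens.
        if value not in self._value_to_token:
            token = "tok_" + secrets.token_hex(8)
            self._value_to_token[value] = token
            self._token_to_value[token] = value
        return self._value_to_token[value]

    def detokenize(self, token: str) -> str:
        return self._token_to_value[token]


SENSITIVE_FIELDS = {"ssn"}
# Hypothetical policy: fields each consumer role may see in the clear.
ROLE_POLICY = {"analyst": set(), "fraud_team": {"ssn"}}


def protect(record: dict, vault: TokenVault) -> dict:
    """Source side: tokenize sensitive fields before they reach the topic."""
    return {k: vault.tokenize(v) if k in SENSITIVE_FIELDS else v for k, v in record.items()}


def restore(record: dict, vault: TokenVault, role: str) -> dict:
    """Sink side: detokenize only the fields this role is allowed to see."""
    allowed = ROLE_POLICY.get(role, set())
    return {k: vault.detokenize(v) if k in allowed else v for k, v in record.items()}


vault = TokenVault()
original = {"id": 7, "ssn": "123-45-6789"}
in_topic = protect(original, vault)
print(restore(in_topic, vault, "fraud_team"))  # original values restored
print(restore(in_topic, vault, "analyst"))     # tokens left in place
```

Keeping the policy check on the restore path means the data in the topic stays protected for every consumer by default.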

The Baffle Single Message Transform can be integrated with:

- Kafka Connect

All sink and source connectors are supported:

- Sources and sinks: Read data from and write data to the Confluent Platform (using the consumer/producer API)

Baffle SMT is compatible with the following:

- Confluent 5.0.x and above

- Kafka 2.0.x and above

- Kafka Connect 5.0.x and above

Our Solution

Baffle delivers an enterprise-level transparent data security platform that secures databases via a "no code" model at the field or file level. The solution supports tokenization, format-preserving encryption (FPE), database and file AES-256 encryption, and role-based access control. As a transparent solution, cloud-native services are easily supported with almost no performance or functionality impact.

Easy

No application code modification required

Fast

Deploy in hours, not weeks

Powerful

No impact to user experience

Flexible

Bring your own key

Secure

AES cryptographic protection

Schedule a live demo with one of our solutions experts to get answers to your questions